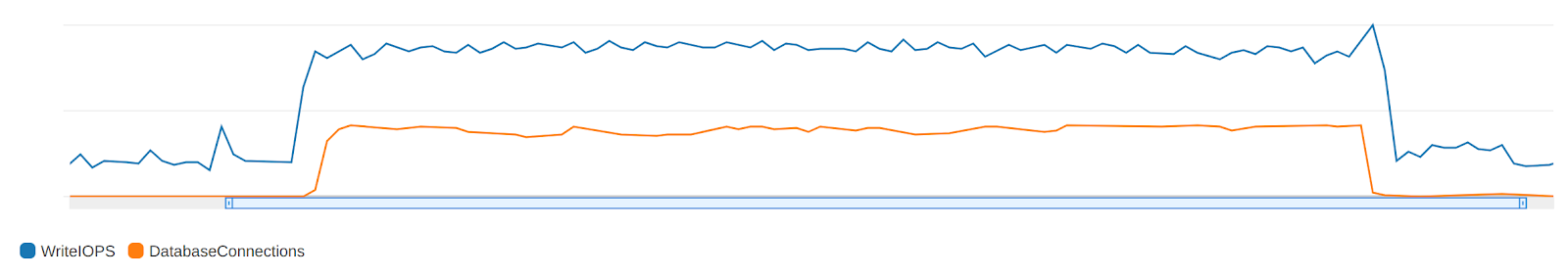

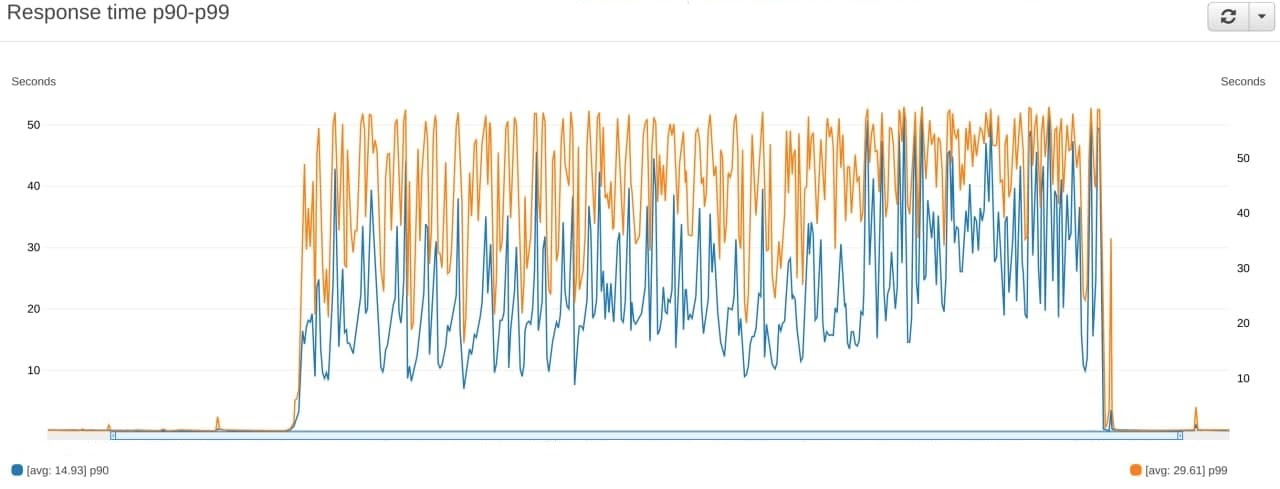

Last night, we saw a sharp increase in requests to certain server API endpoints whose logic makes non-trivial changes to data across several tables. This caused an abnormally large number of operations on one of the analytics tables, which in turn led to lock contention in database queries. Database response times rose significantly (Fig. 1), and server response times rose with them (Fig. 2). As a result, about 30% of requests did not complete within the timeout and were rejected with an error code. Most likely, this error did not affect you.

All of these requests came from a single, recently registered account. We have established that this account does not belong to a major app publisher, so we consider this incident an attack. Historical data shows earlier attempts to probe the system's behavior and performance with traffic that did not match any real-world load; through these probes, the attackers identified the most vulnerable parts of the system. The timing also points to a deliberate attack: it happened at night, when most of the team was off duty.

We worked on the problem from the moment the incident began. By early morning, we had isolated the abnormal requests and fixed the resulting malfunctions. We have since taken preventive measures against this attack vector and closed the vulnerability.