Highlights

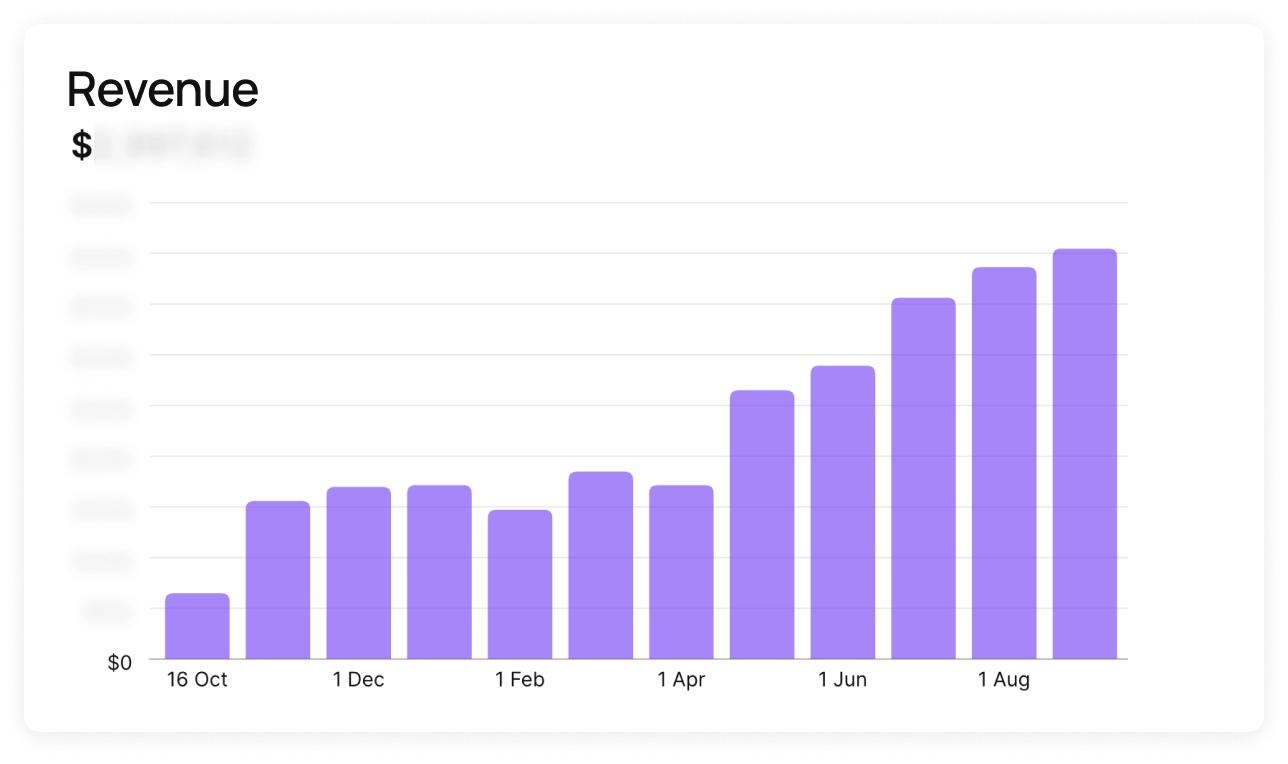

- Weekly subscription revenue grew 300% over 12 months

- Premium conversion grew from 0.4% to ~2% in key markets

- Experiment cadence went from 1 test per quarter to 3+ per month

- 42 paywall variants tested without engineering involvement

With 80 million downloads, Pregnancy+ by Philips is one of the most-used pregnancy apps in the world. It tracks fetal development week by week, pairs that tracking with expert-led video content and midwife Q&As, and gives parents practical tools to prepare for birth.

Like most consumer health apps, Pregnancy+ runs on a freemium model. The free experience covers the essentials. Premium unlocks the full content library, and the team manages localized pricing across 10+ markets globally.

The product had clear value. Converting free users to paid subscribers at the scale the team wanted was a separate problem.

One experiment every two to three months

Before November 2024, the Pregnancy+ growth team ran roughly one paywall experiment per quarter. Each test required engineering involvement, design coordination, and a full App Store release cycle. By the time results arrived, the window to iterate on that learning had closed.

The team had hypotheses and creative direction. The constraint was infrastructure: every idea had to queue behind an engineering sprint before it could be validated.

At that cadence, it was difficult to build meaningful momentum. Losing weeks between a hypothesis and its result meant the team was operating on intuition as much as data.

Removing engineering from the experiment loop

The team adopted Adapty in November 2024. The priority was straightforward: let the growth team run paywall experiments without waiting on a developer.

Adapty gave them no-code paywall management, real-time analytics, and rapid variant deployment. One person owns each experiment end-to-end, collaborating with design and UX research but not gating on engineering time. Self-serve analytics removed the dependency on the data team for experiment readouts.

The team also built discipline into how they ran tests. Each experiment started with a written hypothesis and defined success criteria before anything shipped. They started simple, built momentum on fast wins, and moved to more complex variants once the pattern of learning was established.
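As a rough illustration of that discipline, an experiment can be written down as a small record that refuses to ship without a hypothesis and a success threshold. This is a minimal sketch, not the team's actual tooling and not part of Adapty: the `Experiment` class, its field names, and the example values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Experiment:
    """Hypothetical record for one paywall test (illustrative only)."""
    name: str
    owner: str                  # the one person who owns the test end-to-end
    hypothesis: str             # written before anything ships
    primary_metric: str         # e.g. "revenue per paywall view"
    min_relative_uplift: float  # success threshold, 0.10 means +10% vs control

    def is_ready_to_ship(self) -> bool:
        # A test is ready only if the hypothesis and success criteria exist.
        return (
            bool(self.hypothesis.strip())
            and bool(self.primary_metric.strip())
            and self.min_relative_uplift > 0
        )

    def meets_criteria(self, control_value: float, variant_value: float) -> bool:
        # Compare the variant's relative uplift against the pre-agreed threshold.
        if control_value <= 0:
            raise ValueError("control_value must be positive")
        uplift = (variant_value - control_value) / control_value
        return uplift >= self.min_relative_uplift


# Example with made-up values for the primary metric.
exp = Experiment(
    name="trial-timeline",
    owner="growth-pm",
    hypothesis="Showing the trial timeline reduces uncertainty and lifts revenue.",
    primary_metric="revenue per paywall view",
    min_relative_uplift=0.10,
)
print(exp.is_ready_to_ship())             # True
print(exp.meets_criteria(100.0, 127.0))   # True: +27% clears the +10% bar
```

Fixing the threshold before launch is the point of the design: the readout becomes a yes/no check against a number agreed in advance rather than a judgment made after seeing the data.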

Four experiments that drove revenue

The team ran dozens of tests across the 12 months. Four produced the clearest signal.

Free trial vs. intro discount: +140% revenue

The team pitted two variants against each other: a discounted intro price versus a free trial with no upfront cost. The assumption heading into the test was that the discounted intro price would convert better. The data said otherwise.

The free trial variant generated 140% more revenue than the discounted control. Trial opt-in turned out to be a leading indicator of long-term paid conversion. Users who chose to try the product at full value converted at higher rates and retained longer than users acquired through discounting.
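For readers less used to uplift figures, the short sketch below shows how a relative uplift is computed and what "+140%" means as a multiplier. The function names are illustrative and the revenue figures are made up purely to demonstrate the arithmetic. They are not Pregnancy+ data.

```python
def relative_uplift(control: float, variant: float) -> float:
    """Relative uplift of variant over control, as a fraction (1.4 means +140%)."""
    if control <= 0:
        raise ValueError("control must be positive")
    return (variant - control) / control


def uplift_to_multiplier(uplift_pct: float) -> float:
    """Convert an uplift percentage into a multiplier on the control value."""
    return 1 + uplift_pct / 100


# Hypothetical revenue for two equally sized test groups.
control_revenue = 1_000.0   # discounted intro price (control)
variant_revenue = 2_400.0   # free trial (variant)

uplift = relative_uplift(control_revenue, variant_revenue)
print(f"uplift: {uplift:+.0%}")                                # uplift: +140%
print(f"multiplier: {uplift_to_multiplier(140):.1f}x control")  # multiplier: 2.4x control
```

In other words, a +140% result means the variant earned roughly 2.4 times what the control earned, not 1.4 times.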

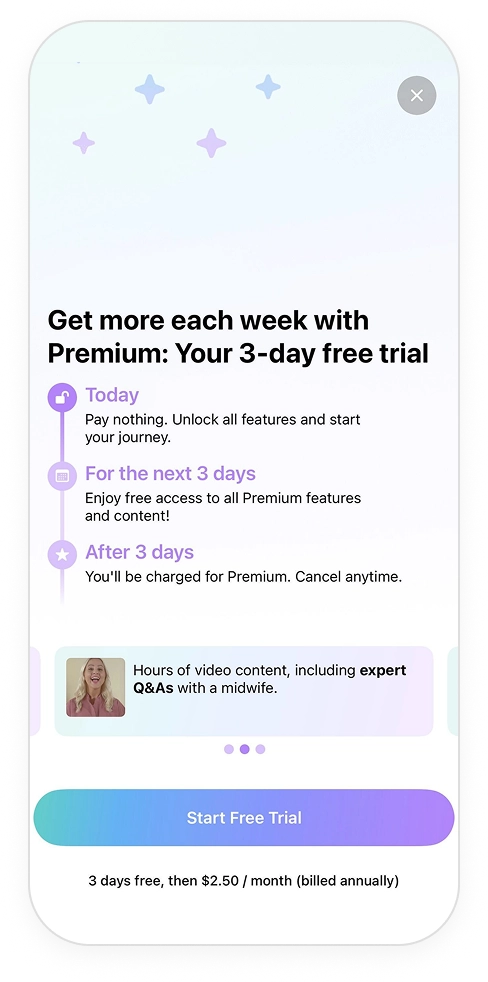

Transparent trial timeline: +27% revenue

The team added a clear visual breakdown of the trial structure to the paywall: what users get today, what happens on day 3, what they are charged after day 3, and when they can cancel. Revenue increased 27% against the control.

The insight was not that users needed more information. It was that uncertainty drives abandonment: users who are unsure what they are signing up for leave before converting. Addressing that uncertainty at the paywall, rather than through post-signup emails or support, removed the friction before it became a lost session.

Day 5 promotion: +17% revenue

Rather than showing a promotional offer immediately on install, the team tested surfacing it on day five. Users had explored the app, engaged with core content, and built a sense of value before seeing the conversion prompt.

Revenue was 17% higher than showing the same offer on day one.

On day one, users are still deciding whether the app is worth their time. By day five, that question is answered. The offer at that point confirms a decision they are already moving toward rather than interrupting one they have not made yet.

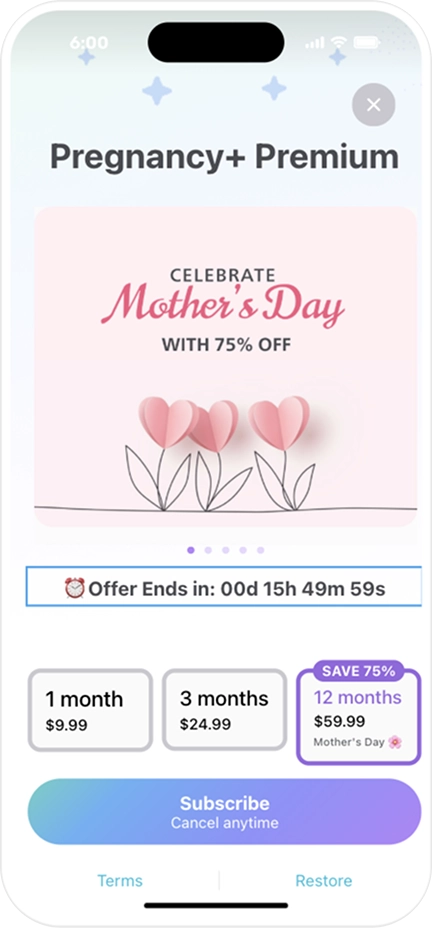

Seasonal campaigns: up to +113% revenue uplift

Alongside continuous experimentation, the team ran time-limited campaigns at three high-intent moments: Black Friday / Cyber Monday (+113% vs prior period), New Year (+53.5%), and Mother’s Day UK (+52%). None of these cannibalized baseline conversion.

The team kept core messaging consistent across all three and adapted the offer frame to the emotional context of each moment. A Black Friday discount is a different purchase decision from a New Year motivation push, which is different again from a Mother’s Day gift. Matching the message to the moment, rather than running a blanket sale, produced the uplift.

The results

Weekly subscription revenue grew 300% over the 12 months tracked in Adapty. Premium conversion grew from 0.4% to approximately 2% in top-performing markets, with iOS running above that average. Monthly retention reached 65% at the second renewal, a figure the team attributes to the quality of trial-to-paid users compared with discount-acquired cohorts.
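The conversion figure is easy to misread, because a move from 0.4% to about 2% is small in percentage points but large in relative terms. The sketch below works through that arithmetic. The 10,000-user cohort is an illustrative assumption, not a reported number, and `rate_change` is a hypothetical helper.

```python
def rate_change(before_pct: float, after_pct: float) -> tuple[float, float]:
    """Return (percentage-point change, ratio of after to before)."""
    if before_pct <= 0:
        raise ValueError("before_pct must be positive")
    return after_pct - before_pct, after_pct / before_pct


points, ratio = rate_change(0.4, 2.0)
print(f"{points:.1f} percentage points, {ratio:.0f}x the original rate")

# Illustrative cohort: how many paid subscribers per 10,000 free users?
cohort = 10_000
before_subs = round(cohort * 0.4 / 100)   # 40
after_subs = round(cohort * 2.0 / 100)    # 200
print(before_subs, after_subs)
```

On these assumptions the same 10,000 free users yield about 200 subscribers instead of 40, a fivefold increase from a change of only 1.6 percentage points.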

Experiment cadence went from one test every two to three months to three or more per month. That compression is the underlying driver. More tests mean faster signals, and faster signals mean the team finds what works before competitors running slower cycles finish their first experiment.