Most of your ad budget will go into just a few creatives. The tricky part is knowing which ones are worth scaling. Creative ad testing has become a core part of every high-performing web-to-app strategy. If you don’t have a system for testing and improving your ads, you’ll spend more and learn less. In this article, I’m sharing how I approach testing and scaling creatives, based on the same process I’ve used while running 7-8 figure monthly spends at places like Cash App and Calm. These are practical, repeatable tactics that high-performing growth teams use every day.

How many ad creatives do I need to test for success?

The top 2% of ads now account for roughly 50% of spend, according to AppsFlyer’s creative optimization report. In other words, only about 2 in every 100 creatives become true scale winners; the rest never perform well enough to scale, and many don’t even survive the test. In my experience, it usually takes dozens of tests to find one that really scales. The more concepts you test, the better your chances of hitting something that works at scale.

According to Facebook research, fast-growing companies test 11x more creatives than average. They do it not just because they can afford to, but because that’s how the delivery algorithms learn. In 2026, the bar has moved even higher. With platforms like Meta and Google automating targeting through Advantage+ and Performance Max, creative has become the single biggest lever advertisers can pull. Creative velocity (how fast you can produce, test, and iterate on new ad concepts) now matters more than bidding strategies or audience segmentation.

High-velocity teams aim to launch 20–50 creative variations weekly. This isn’t only to combat fatigue. It also feeds the algorithm the signal diversity it needs to find the right users at the right moments. Creative fatigue, shifting audience behavior, and evolving platform algorithms make ad testing fundamental, not optional.

What is a good ad testing framework?

Creative testing works best when it runs continuously. A simple loop, repeated often, brings better results than occasional campaigns. We’ve seen the best results when new ads go live every week. Even when current creatives perform well, add fresh ones to the mix. That keeps delivery stable and performance strong. Here’s what makes it work:

- Low-cost production. Modular templates and AI tools help you build fast. You don’t need to be perfect.

- High variation. Test new hooks, formats, visuals, and copy. Small changes can shift results.

- Steady output. Regular launches prevent performance drops and reduce fatigue.

- Systematic ad testing. Launch multiple versions and let the audience choose the winner.

Laboratory testing vs. system deployment

One of the most important shifts in 2026 is separating where you learn from where you scale. Creative testing now requires two distinct environments:

- Laboratory testing: Isolate one creative variable at a time to validate messaging. Use dedicated test campaigns with controlled budgets. The goal is clarity: understanding what resonates and why.

- System deployment: Consolidate validated top performers into your main campaigns to maximize algorithmic efficiency. The goal is scale — letting the algorithm multiply what you’ve already proven works.

Confuse the two and you optimize noise. Separate them and you train the algorithm with intention. The laboratory shapes the inputs; the system multiplies them. This distinction matters because ad platforms optimize based on early engagement signals. If you mix experimental creatives with proven winners in the same campaign, the algorithm will starve new ads of impressions before they ever get a fair chance.

What are the best performing ad trends in 2026?

Trends come and go. But when you’re testing fast, they open up more room for experimentation.

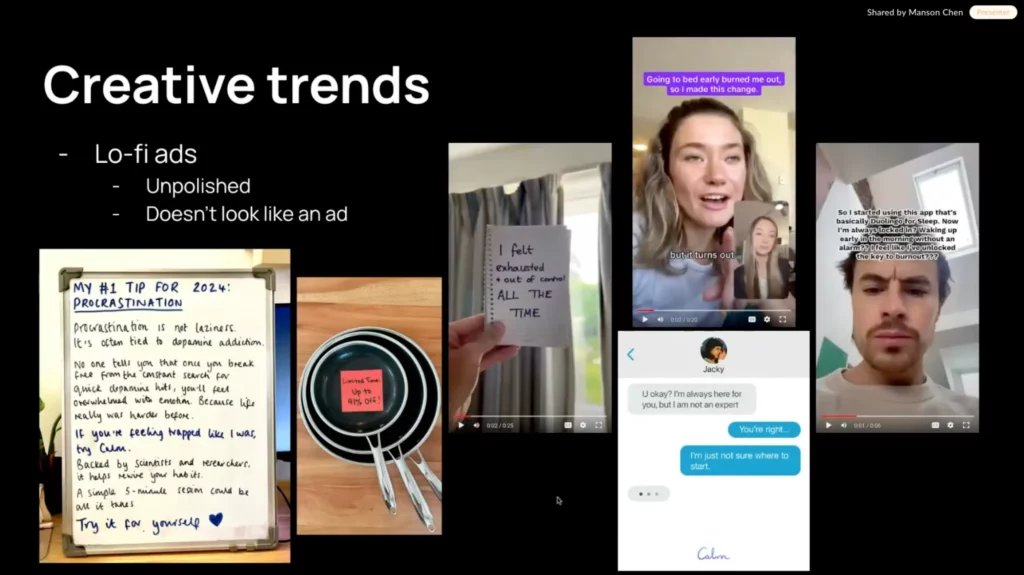

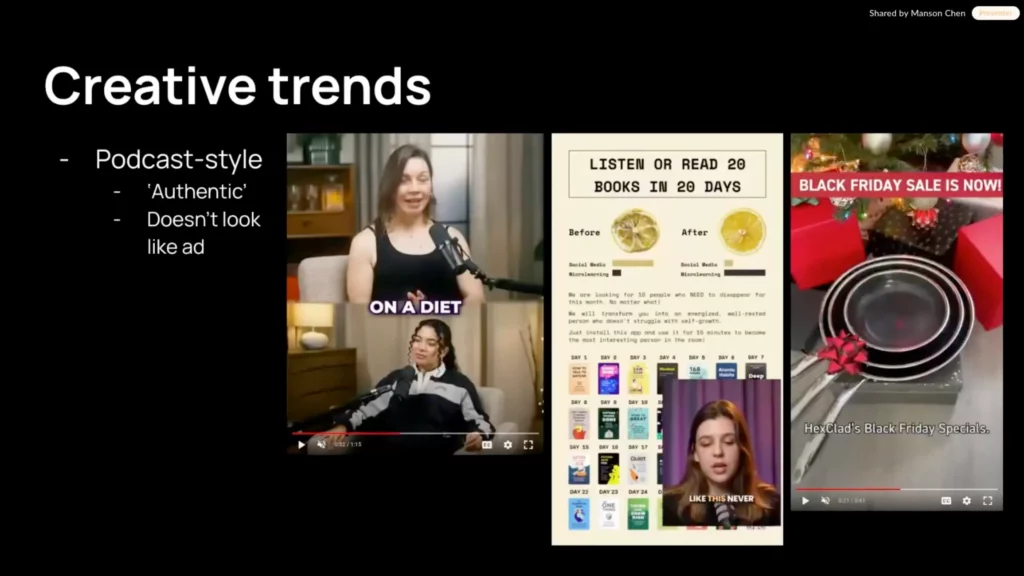

1. Lo-fi statics. Formats like Post-it notes, Slack chats, iMessage threads, and whiteboard scribbles. They look raw and messy on purpose. And that’s exactly the point — they blend into the feed and don’t scream “BUY ME.”

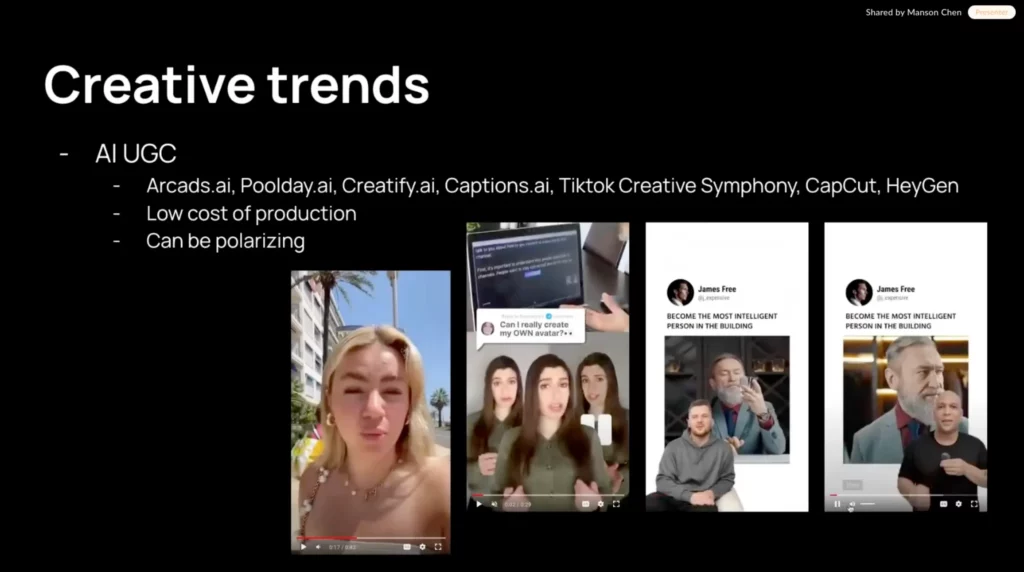

2. AI-generated UGC. Tools like Arc Ads, Koro, and Cify make it easy to spin up testimonial-style videos without actors. Production costs have dropped dramatically, with some brands reporting 60–80% cost reductions when using AI for creative production. That’s cheap enough to run 50 variations and just see what sticks. Even if 9 out of 10 don’t convert, the one that does can carry the spend.

3. AI video generation at scale. This is the biggest shift since this article was first published. Enterprise AI video adoption grew 127% in 2025, with production timelines collapsing from days to minutes and costs dropping by up to 91%. Tools like Google’s Veo 3 (inside Ads Asset Studio), ByteDance’s Seedance 2.0, and LTX Studio can generate multi-shot commercial sequences (product reveal, lifestyle shot, call to action) directly from a text prompt or product photo. In Q4 2025 alone, advertisers used Google’s Gemini to generate nearly 70 million creative assets inside Performance Max and AI Max campaigns, a 3x year-over-year increase.

4. Podcast-style creative. Voice-over paired with a waveform and some lo-fi visuals. They grab attention without looking like an ad. That’s especially useful for web-to-app flows that rely on storytelling. Feeds are full of noise, but people still stop for voices that feel expert and real.

5. Sensory and “unhinged” creative. A newer trend for 2026 — brands are leaning into maximalist, playful, and even chaotic visual styles. Think bold textures, absurd humor, and content that prioritizes emotional reaction over polish. Adobe’s 2026 Creative Trends Report highlights that audiences increasingly crave content that feels human, tactile, and grounded in real experiences rather than over-produced perfection.

6. Short-form vertical video. In 2026, roughly 90% of Meta inventory is vertical. If your ads aren’t designed for 9:16, you’re leaving CPM efficiency on the table. TikTok, YouTube Shorts, and Instagram Reels have set the standard: snackable 6–15 second clips that deliver impact without dragging on.

Some of these trends are better suited for web-to-app than others. Static lo-fi and podcast-style creatives tend to convert best when paired with native-feeling landing pages. AI-generated video hooks are great for testing scroll-stopping ideas at the top of the funnel, especially when you’re trying to fix drop-off points in your web-to-app flow. You don’t need to chase trends, but knowing them can make your ad testing smarter and more diverse.

AI tools for ad creative production in 2026

The tooling landscape has changed significantly. Here’s an overview of what’s available:

| Tool | Type | Best for |
|---|---|---|
| Google Veo 3 / Nano Banana | AI video + image generation | Google Ads creatives (free in Ads Asset Studio) |
| Seedance 2.0 (ByteDance) | AI video production | Multi-shot commercial sequences from text or image |
| Koro | AI UGC video | High-velocity creative testing |
| Sovran | Video assembly + versioning | Modular ad variation and batch production |
| LTX Studio | End-to-end AI video | Pre-viz to final delivery for teams |
| Midjourney / ChatGPT | AI image generation | Scroll-stopping static concepts |
| Canva | Design + overlays | Quick static variants and simple animations |

The key shift: AI isn’t replacing creative teams — it’s removing production bottlenecks. The teams moving fastest in 2026 understand where AI creates leverage and where human judgment still leads, and build their workflows around that distinction.

How do I find messaging that resonates with my audience?

Ad testing starts way before you open Figma or hit “record.” Before working on any ad, I look at how people actually talk. Here’s how to find it:

- Start with your app reviews. Export them, drop them into ChatGPT, and ask it to pull out 20 concise 5-star reviews. These can be turned into static ads: short quotes that show how much users love the app. In one project we tested 50 of them and found 3–5 that consistently performed across video, static, and UGC formats.

- Dig through Reddit. Search for your app, category, or problem space. Look at how real people talk about their needs, frustrations, and habits. These exact phrases often make the best hooks, better than anything copywriters come up with in a vacuum.

- Check post comments and ad replies. See how users react to your own ads (and your competitors’). What are they curious about? What language do they use? A single phrase from a comment can turn into a high-performing angle.
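If the review export is large, you can run a small pre-filter before the ChatGPT step so the prompt stays focused on short 5-star quotes. This is a minimal sketch under a few assumptions. The export is taken to be a CSV with hypothetical `rating` and `text` columns; real App Store Connect or Google Play exports use their own column names. The 25-word cutoff is an arbitrary illustration.

```python
import csv
import io


def short_five_star_quotes(csv_text: str, max_words: int = 25, limit: int = 20) -> list[str]:
    """Return up to `limit` unique 5-star review texts of at most `max_words` words."""
    seen: set[str] = set()
    quotes: list[str] = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        text = " ".join(row["text"].split())  # collapse stray whitespace and newlines
        if row["rating"].strip() != "5" or not text:
            continue
        if len(text.split()) > max_words:
            continue
        key = text.lower()
        if key in seen:  # skip verbatim duplicates
            continue
        seen.add(key)
        quotes.append(text)
    # Shortest first, since short quotes are the easiest to fit on a static ad
    quotes.sort(key=len)
    return quotes[:limit]
```

The output is a shortlist to hand to ChatGPT (or to read yourself). The final judgment about which quotes sound most like your users still happens in that step.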

The point is to listen, extract, and test what your audience wants. A good ad speaks the way your users already do.

What does real “creative diversity” look like?

Small changes like tweaking the colors or shuffling the headlines can help, but rarely lead to breakthroughs. To find winning creatives, you need fundamentally different ideas. This is especially critical in 2026. Meta’s Andromeda algorithm now evaluates creatives across multiple dimensions simultaneously — visual structure, narrative logic, hook mechanics, emotional tone, and engagement behavior. If all your ads use the same angle and format, the algorithm picks one and ignores the rest. Creative iteration (changing the hook) is not the same as creative variation (changing the concept). Your ad library should look like a film festival, not a casting call. Start by mapping out your options:

| What you’re exploring | If it’s like this… | Try this instead |
|---|---|---|
| Motivation | Generic benefits like “save time” | Tap into specific pains: “Can’t focus? Try this in your next break” |
| Awareness stage | Selling features to users who barely know the problem | Lead with the problem they do feel, and only then introduce your solution |
| Message | “Best app for X” or “#1 on the App Store” | Use real testimonials, user quotes, or emotional hooks |
| Format | Polished promo video | Lo-fi styles: Post-it notes, text convos, webcam rants, Reddit screenshots |
| Tone | Always polished and formal | Test casual, bold, offbeat, or even annoyed tones |

You don’t need to hit everything at once. But if all your ads use the same angle and format, you’re leaving better ideas unexplored. Real creative diversity means testing different ways of seeing rather than different ways of designing.
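One way to enforce concept-level diversity is to treat each ad as a combination of the dimensions in the table above. A new concept is accepted only if it differs from every concept already picked in at least two dimensions. The sketch below is a minimal greedy version of that filter. The option labels are shortened from the table, and the two-dimension threshold is an assumption, not a platform rule.

```python
from itertools import product

# Dimensions and options, abbreviated from the table above (illustrative labels).
DIMENSIONS = {
    "motivation": ["generic benefit", "specific pain"],
    "awareness": ["feature-first", "problem-first"],
    "message": ["#1 claim", "testimonial", "emotional hook"],
    "format": ["polished video", "post-it", "text convo", "webcam rant"],
    "tone": ["formal", "casual", "annoyed"],
}


def differences(a: tuple[str, ...], b: tuple[str, ...]) -> int:
    """Number of dimensions in which two concepts differ (Hamming distance)."""
    return sum(x != y for x, y in zip(a, b))


def diverse_concepts(dimensions: dict[str, list[str]], k: int,
                     min_diff: int = 2) -> list[dict[str, str]]:
    """Greedily pick up to k concepts, each differing from all earlier picks in >= min_diff dimensions."""
    names = list(dimensions)
    picked: list[tuple[str, ...]] = []
    for combo in product(*dimensions.values()):
        if all(differences(combo, p) >= min_diff for p in picked):
            picked.append(combo)
            if len(picked) == k:
                break
    return [dict(zip(names, combo)) for combo in picked]


if __name__ == "__main__":
    for concept in diverse_concepts(DIMENSIONS, k=5):
        print(concept)
```

Raising `min_diff` pushes the batch toward creative variation (different concepts) and away from creative iteration (same concept, small tweaks).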

How do I structure and budget my ad campaigns?

Ad testing works best when it’s structured. But the right structure in 2026 looks different from what it did just a year ago, thanks to major platform changes.

What changed with Meta’s Andromeda algorithm

Meta’s Andromeda retrieval algorithm, which rolled out fully in late 2025, fundamentally changed how ads are distributed. It’s 100x faster at matching people to ads and can handle vastly more ad variants in parallel. This means the old Test → Scale approach — with a dedicated testing campaign and separate scale campaigns — no longer works as well. The new reality: consolidated campaign structures win. Advantage+ campaigns can handle much higher creative volume in a single ad set, and ads that do well in testing have been harder to scale successfully out of their original test environment. The algorithm learns which visual elements, narratives, and emotional tones resonate with specific audience segments, showing different creative variations to different users based on engagement patterns.

How to set up your testing structure

- Create a dedicated test campaign — but keep it lean. Keep experiments separate from evergreen. Meta’s built-in Creative Testing feature now lets you test up to five creatives within the same ad set with equal budget distribution and no audience overlap between variants.

- Test concepts, not just variables. The old advice of “1 variable per test” still applies for element-level learning, but in 2026 you should also test whole concepts against each other. One concept expressed through multiple executions (different formats, lengths, hooks serving the same idea) gives the algorithm more signal to work with.

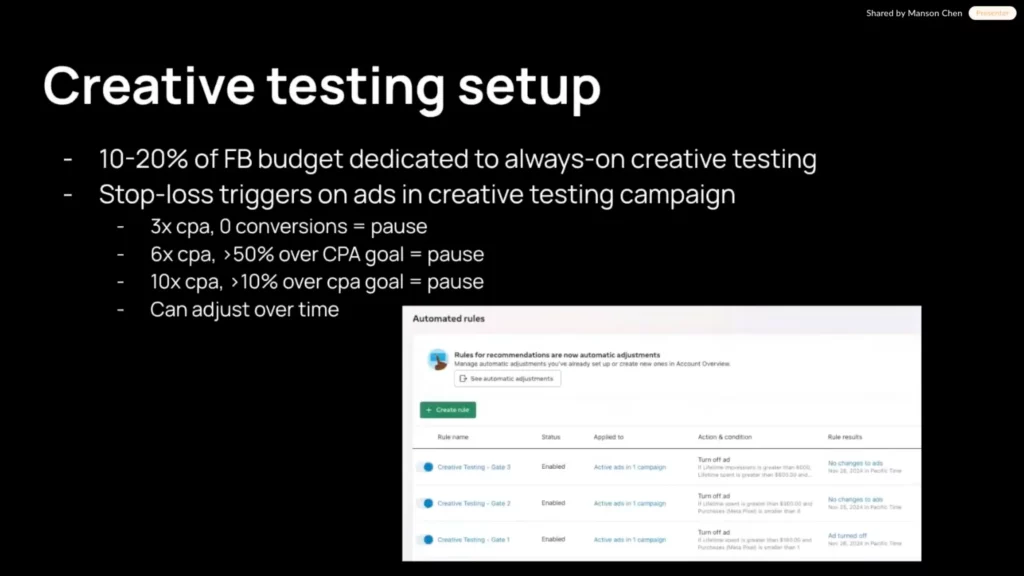

- Budget 10–20% for ad testing. Not just once. Every week. That’s how you stay ahead of fatigue and keep your winners fresh. For each creative variant, aim to spend at least 3–4x your target CPA before making a decision. That gives each variant room to produce several conversions, enough for a directional read, though not a guarantee of statistical significance.

- Use stop-loss triggers. Avoid wasting ad spend on poor performers. Set clear thresholds: no conversions after 3x CPA = pause. CPA exceeds goal by 50% = pause. $X spent with no traction = pause.

- Move winners into evergreen. Once a creative hits your benchmark, feed it into your best-performing ad sets. But in 2026, also repurpose winning messages across new formats: winning video hook → static variation; winning static headline → video hook; winning story → 3-frame carousel.
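The budget and stop-loss rules above can be expressed as a small decision helper. This is a minimal sketch under stated assumptions. It uses a 3x-CPA decision threshold (the low end of the 3–4x range above), and `max_cpa_overrun=0.5` encodes “CPA exceeds goal by 50%”. The function names and the keep testing / pause / graduate labels are hypothetical. The “$X spent with no traction” rule is left out because its threshold depends on your account. The sketch also assumes the CPA rule is checked only once a variant reaches the decision spend, so one early expensive conversion doesn’t trigger a pause.

```python
from dataclasses import dataclass


def variants_per_week(weekly_budget: float, test_share: float, target_cpa: float,
                      cpa_multiple: float = 3.0) -> int:
    """Variants the weekly test budget can carry to a decision at cpa_multiple x target CPA each."""
    test_budget = weekly_budget * test_share
    spend_per_variant = target_cpa * cpa_multiple
    return int(test_budget // spend_per_variant)


@dataclass
class VariantResult:
    spend: float
    conversions: int


def decide(result: VariantResult, target_cpa: float, cpa_multiple: float = 3.0,
           max_cpa_overrun: float = 0.5) -> str:
    """Return 'keep testing', 'pause', or 'graduate' for one test variant."""
    if result.spend < target_cpa * cpa_multiple:
        return "keep testing"  # not enough spend for a read yet
    if result.conversions == 0:
        return "pause"  # stop-loss: no conversions after cpa_multiple x target CPA
    cpa = result.spend / result.conversions
    if cpa > target_cpa * (1 + max_cpa_overrun):
        return "pause"  # stop-loss: CPA exceeds goal by more than max_cpa_overrun
    if cpa <= target_cpa:
        return "graduate"  # hits the benchmark: move into evergreen
    return "keep testing"  # borderline: above goal but under the pause threshold
```

For example, a $100,000 weekly budget with 15% reserved for testing and a $50 target CPA gives `variants_per_week(100_000, 0.15, 50) == 100`. That is enough headroom for the 20–50 weekly variations discussed earlier.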

Old approach vs. 2026 approach

| Dimension | Pre-2026 approach | 2026 approach |
|---|---|---|
| Campaign structure | Separate test + scale campaigns | Consolidated Advantage+ with creative diversity |
| What you test | Individual variables (hook, color) | Whole concepts across formats |
| Key metrics | CTR, CPA | Hook rate, hold rate, CPMr, signal diversity |
| Algorithm’s role | You control delivery | You influence it via creative inputs |
| Creative volume | 5–10 variants per week | 20–50 variants per week |
| Production method | Manual + templates | AI-assisted (video, image, UGC generation) |

How to scale winning ad creatives

Found a creative that works? Now set it up for scale without breaking what made it work.

- Use Post IDs, not duplicates. Duplicating creates a fresh post and resets engagement. Reusing the original Post ID keeps all the likes, comments, and social proof.

- Leverage Meta’s Flexible Ad Format. This feature lets the system mix and match creative variations automatically across placements — a built-in multivariate tester that pairs well with Andromeda’s design. Use it for iterations within a proven concept.

- Broad targeting + cost caps. Let the algorithm explore wide, but cap bids to keep performance in check. Broad audiences usually win long-term. Brands investing in first-party data — email lists, customer lists, loyalty programs — see significantly better campaign performance compared to cold prospecting alone.

- Bid higher for better users. If you’re using Meta’s bid multiplier API, you can bid more for high-LTV groups (like women 35+) without touching your main targeting.

- Monitor CPMr as your early warning system. Cost per 1,000 Reach is the metric that separates scale from stagnation. A rising CPMr means you’re paying to show the same ads to the same people. When CPMr spikes, don’t tweak bids — refresh creative.

- Repurpose across formats. When you find a winner, don’t crank out 20 minor iterations. Instead, take the winning messaging and express it across fundamentally different creative formats. A winning video can become a static, a carousel, and a Reel — each giving the algorithm a new signal dimension.
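To turn “when CPMr spikes, refresh creative” into an alert, you can compare each day’s CPMr against a trailing baseline. This is a minimal sketch that assumes daily spend and reach per ad set are already exported. The 7-day window and 25% spike threshold are illustrative assumptions, not Meta defaults. Reach doesn’t add up across days, because the same person can be reached on several days, so the sketch works on daily reach as reported.

```python
from statistics import mean


def cpmr(spend: float, reach: int) -> float:
    """Cost per 1,000 people reached."""
    if reach <= 0:
        raise ValueError("reach must be positive")
    return spend / (reach / 1000)


def fatigue_days(daily: list[tuple[float, int]], window: int = 7,
                 spike: float = 0.25) -> list[int]:
    """Indices of days whose CPMr is more than `spike` above the trailing `window`-day average."""
    values = [cpmr(spend, reach) for spend, reach in daily]
    flagged = []
    for i in range(window, len(values)):
        baseline = mean(values[i - window:i])
        if values[i] > baseline * (1 + spike):
            flagged.append(i)
    return flagged
```

A flagged day means the same spend is buying noticeably less unique reach. Following the advice above, the response is a creative refresh, not a bid change.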

No need to reinvent the setup. Find what works, give it room to perform, and keep feeding the system fresh creative so fatigue never eats your performance.

What metrics matter most in ad testing

Once you’re testing consistently, the next step is knowing what to watch.

| Metric | How to measure | Good benchmark |
|---|---|---|
| Hook rate | 3-second views ÷ impressions | 30%+ |
| Hold rate | 15-second views ÷ impressions | 15%+ |
| CTR | Link clicks ÷ impressions | ~1% |
| Creative hit rate | Winning creatives ÷ total tested | ~10% |
| CPMr (cost per 1,000 reach) | Spend ÷ (reach ÷ 1,000) | <$20 (healthy); spikes = fatigue warning |
| Creative velocity | New variations launched per week | 20–50 for high-growth brands |
| Statistical significance spend | Spend per variant before decision | 3–4x target CPA per variant |
| Naming consistency | Structured naming for fast filtering (e.g., Format_Hook_Variant) | Helps spot patterns across tests |

A few notes on these metrics for 2026: hook rate is one of the strongest early signals ad platforms use to decide which ads get more impressions. An ad with a great hook rate but a low conversion rate may still dominate delivery, so watch both numbers together. And don’t underestimate CPMr: it tells you your creative library is running out of steam before CPA does. The goal is to build a system that helps you spot what’s working and double down faster.
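The formulas in the metrics table can be computed directly from an ads export. This is a minimal sketch that assumes a hypothetical per-ad row with impressions, 3-second views, 15-second views, link clicks, spend, and reach. It also assumes ad names follow the Format_Hook_Variant convention. The sketch rolls hook rate up by hook so that patterns across tests are easy to spot. Hold rate uses impressions as the denominator, as in the table; some teams divide 15-second views by 3-second views instead.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class AdRow:
    name: str          # Format_Hook_Variant, e.g. "Video_PainPoint_v1"
    impressions: int
    views_3s: int
    views_15s: int
    link_clicks: int
    spend: float
    reach: int

    @property
    def hook_rate(self) -> float:
        return self.views_3s / self.impressions

    @property
    def hold_rate(self) -> float:
        return self.views_15s / self.impressions

    @property
    def ctr(self) -> float:
        return self.link_clicks / self.impressions

    @property
    def cpmr(self) -> float:
        return self.spend / (self.reach / 1000)


def parse_name(name: str) -> dict[str, str]:
    """Split a Format_Hook_Variant ad name; raises ValueError if it has fewer than 3 parts."""
    fmt, hook, variant = name.split("_", 2)
    return {"format": fmt, "hook": hook, "variant": variant}


def hook_rate_by_hook(rows: list[AdRow]) -> dict[str, float]:
    """Impression-weighted hook rate per hook, pooled across formats and variants."""
    views: dict[str, int] = defaultdict(int)
    imps: dict[str, int] = defaultdict(int)
    for row in rows:
        hook = parse_name(row.name)["hook"]
        views[hook] += row.views_3s
        imps[hook] += row.impressions
    return {hook: views[hook] / imps[hook] for hook in imps}
```

Pooling by impressions rather than averaging per-ad rates keeps a single low-volume ad from skewing a hook’s score.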

How ad testing connects to your web-to-app funnel

Ad creatives are just the top of the funnel. For web-to-app campaigns, what happens after the click matters just as much. Your ad messaging, landing page experience, and paywall need to tell a consistent story. A few things to keep in mind:

- Match the ad to the landing page. If your ad uses a specific pain point or testimonial, the landing page should immediately reinforce that angle. Mismatch between ad and landing page is one of the most common reasons web-to-app funnels break down.

- Test the full path, not just the ad. A creative that drives great CTR but leads to a poorly converting paywall wastes budget. Use paywall A/B testing alongside your ad experiments to optimize the entire journey.

- Measure down-funnel metrics. Don’t stop at CPA or CTR. Track trial starts, paywall conversion rates, and ultimately LTV. A slightly higher CPA creative that attracts users with better retention is worth more than a cheap-click ad that produces churn.
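One way to compare creatives on down-funnel value, not CPA alone, is to estimate lifetime value generated per dollar of spend. The calculation is trial-to-paid conversion times LTV, divided by cost per trial. This is a minimal sketch that assumes the platform CPA is measured on trial starts and that LTV comes from your own cohort data. The numbers are made up purely to illustrate the comparison.

```python
from dataclasses import dataclass


@dataclass
class CreativeFunnel:
    name: str
    cost_per_trial: float   # platform-reported CPA, assuming the tracked event is a trial start
    trial_to_paid: float    # share of trials that convert on the paywall
    ltv_per_payer: float    # expected lifetime value of a paying user

    @property
    def value_per_dollar(self) -> float:
        """Expected LTV generated per $1 of ad spend."""
        return self.trial_to_paid * self.ltv_per_payer / self.cost_per_trial


# Hypothetical example numbers, not real campaign data.
cheap = CreativeFunnel("cheap-click", cost_per_trial=20.0, trial_to_paid=0.20, ltv_per_payer=60.0)
quality = CreativeFunnel("higher-CPA", cost_per_trial=25.0, trial_to_paid=0.30, ltv_per_payer=90.0)
ranked = sorted([cheap, quality], key=lambda c: c.value_per_dollar, reverse=True)
```

In this made-up example, the cheap-click creative returns about $0.60 of LTV per dollar. The higher-CPA creative returns about $1.08, so it ranks first despite costing more per trial.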

The best web-to-app ad testing setups treat creative, landing page, and onboarding as one integrated system rather than separate optimization silos.

TL;DR: Creative ad testing checklist for 2026

- Test often. Don’t wait for fatigue.

- Separate laboratory testing (learning) from system deployment (scaling).

- Aim for 20–50 creative variations per week if your budget allows.

- Focus on concepts, not just individual elements.

- Use AI tools to cut production costs and multiply output.

- Design for 9:16 vertical; that’s where most inventory lives.

- Let the algorithm vote. Give it diverse creative options.

- Use stop-loss rules: no conversions at 3x CPA = pause.

- Track hook rate, hold rate, and CPMr together.

- Spend 3–4x target CPA per variant before making decisions.

- Move winners to evergreen with Post IDs.

- Repurpose winning messages across new formats.

- Test the full funnel: ad → landing page → paywall.

Final thoughts

Creative ad testing is a key part of steady, scalable growth. It helps you learn faster, spot what resonates, and keep performance strong across your funnel. For web-to-app campaigns, it brings clarity to what actually works, and makes it easier to improve with every launch. I’ve run thousands of video ad creatives over the years, and one thing became painfully clear: producing and testing them at scale is way harder than it should be. Too much time goes into tweaking edits, exporting files, and trying to stay organized.

So I built Sovran — a tool that helps marketers assemble, version, and test video ads more efficiently. It saves me hours every week, and I finally spend more time learning what works instead of managing files.

You can check it out here: Sovran.ai

And find me on LinkedIn: Manson Chen